Using machine learning to evaluate and enhance models of probabilistic inference

Abstract

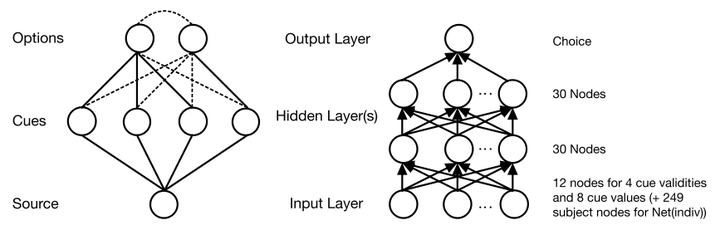

Probabilistic inference is a class of choice tasks in which individuals rely on probabilistic cues to choose the option that is best on a given criterion. We apply a machine learning approach to a dataset of 62,311 choices in randomly generated probabilistic inference tasks to evaluate existing models and identify directions for further improvement. A generic multi-layer neural network, Net(agg), cross-predicts choices with 85.5% accuracy without taking interindividual differences into account. All content models that do not consider interindividual differences perform below this estimate for the maximum level of predictability. The naïve Bayesian model outperforms these models and performs indistinguishably from the benchmark provided by Net(agg). Taking into account all kinds of interindividual differences in the generic network, Net(indiv), increases the upper benchmark of predictive accuracy to 88.9%. The parallel constraint satisfaction model for decision making, PCS-DM(fitted), performs 1% below the benchmark provided by Net(indiv). All other models perform significantly worse. Our analyses imply that, in these kinds of tasks, Bayes and, to some degree, PCS-DM(fitted) can hardly be outperformed in predicting choices. Nevertheless, there is still a need for better process models: further analyses show, for example, that the predictive accuracy of PCS-DM(fitted) for decision time (r = .71) and confidence (r = .60) could potentially be improved further. We conclude with a discussion of the potential of machine learning as a tool for evaluating and enhancing models and theory.
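To make the two central notions concrete, the following minimal sketch illustrates a naïve Bayesian choice rule for a two-option probabilistic inference task and the proportion-correct measure of predictive accuracy. It is an illustration under stated assumptions, not the implementation used in the analyses: cue values are assumed to be binary (1 = positive, 0 = negative), cues are assumed to be conditionally independent with equal prior odds, and the function names, cue patterns, and validities are hypothetical.

```python
import math


def naive_bayes_choice(cues_a, cues_b, validities):
    """Choose between options A and B by summing log-odds over cues.

    Assumptions (hypothetical, for illustration only):
    - cue values are binary: 1 = positive, 0 = negative
    - validities[i] is the probability that cue i points to the better
      option when it discriminates (strictly between 0 and 1)
    - cues are conditionally independent and prior odds are equal
    """
    log_odds_a = 0.0
    for a, b, v in zip(cues_a, cues_b, validities):
        # A cue that does not discriminate (a == b) contributes nothing.
        log_odds_a += (a - b) * math.log(v / (1.0 - v))
    if log_odds_a > 0:
        return "A"
    if log_odds_a < 0:
        return "B"
    return "tie"


def predictive_accuracy(predicted, observed):
    """Proportion of observed choices that match the model's predictions."""
    matches = sum(p == o for p, o in zip(predicted, observed))
    return matches / len(observed)


# One highly valid cue favors A; two weaker cues favor B.
strong_first_cue = naive_bayes_choice([1, 0, 0], [0, 1, 1], [0.9, 0.6, 0.6])

# With a less dominant first cue, the two weaker cues jointly outweigh it.
weak_first_cue = naive_bayes_choice([1, 0, 0], [0, 1, 1], [0.7, 0.65, 0.65])

# Hypothetical predictions compared with hypothetical observed choices.
accuracy = predictive_accuracy(["A", "B", "A", "A"], ["A", "B", "B", "A"])
```

In the first hypothetical task the single strong cue dominates, whereas in the second the weaker cues jointly reverse the prediction, which is the kind of compensatory integration that distinguishes a Bayesian rule from a strategy that considers only the most valid cue.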